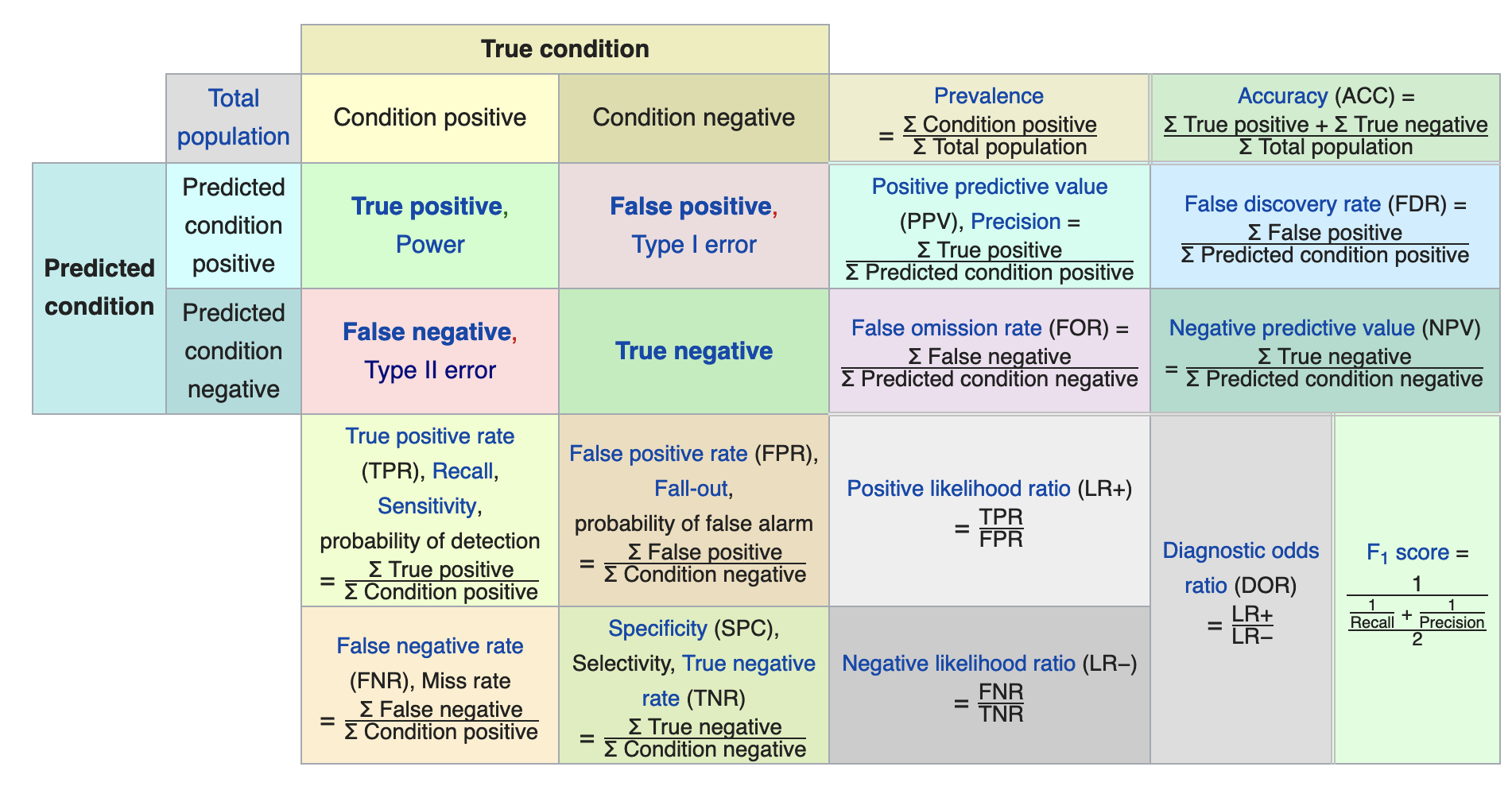

In a perfect world, we’d all take our perfectly clean data, feed it to a machine learning model, and get amazing results. Unfortunately, algorithms aren’t accurate 100% of the time. And in business, a high error rate can potentially cost an organization millions of dollars. So, how do you go about understanding a classification algorithm’s performance so that you can better understand its results?

With the help of a confusion matrix, you can measure the factors affecting your classification model’s performance, precision, and accuracy, enabling you to make smarter, more informed decisions. In this guide, we’ll explore how to build a confusion matrix and the potential value it can contribute to your business. Let’s get started!

What Is a Confusion Matrix?

Don’t worry: the confusion matrix isn’t as complex as the name makes it seem.

Also known as an error matrix, a confusion matrix is a table that helps you visualize a classification model’s performance on a set of test data for which the actual values are known. Confusion matrices are an effective tool to help data analysts evaluate which tasks an ML model performs well and which it performs poorly.

Outcomes of a Confusion Matrix

A confusion matrix helps measure performance where an algorithm’s output can fall into two or more categories, typically positive or negative, yes or no. For a binary problem, the table consists of four cells, each representing a unique combination of predicted and actual values.

- True Positive (TP): The prediction was positive, and the actual value was positive. TP is the numerator of sensitivity, TP / (TP + FN).
- True Negative (TN): The prediction was negative, and the actual value was negative. TN is the numerator of specificity, TN / (TN + FP).
- False Positive (FP): Also known as a Type-I error, an FP prediction is positive, but the actual value was negative.
- False Negative (FN): Also known as a Type-II error, an FN prediction is negative, but the actual value was positive.

How to Create and Calculate a Confusion Matrix in Eight Steps

Now that you have an idea of what a confusion matrix is, let’s look at the basic process of calculating confusion matrices for binary classification problems.

To get started, construct a table with two columns and two rows, with an additional column and row for labeling your chart. You can set up your table with the predicted values across the top as columns and the actual values down the left side as rows.

Enter the Predicted Values

Fill the chart with the data. If you want to predict the number of correct and incorrect answers from a data set that contains 50 questions, you can have two outputs, either “correct” or “incorrect.” If you predict 40 questions correct and 10 questions incorrect, you enter these values as the totals for your predicted “correct” and “incorrect” columns.

Now, enter the actual values in the matrix. These actual outputs determine the “true” and “false” values in your table. The “true negative” and “false positive” values are the actual negative results, while the “true positive” and “false negative” values are the actual positive outcomes.

5. The classification accuracy rate measures how often the model makes a correct prediction. It is the ratio of the number of correct predictions to the total number of predictions made by the classifier:

Accuracy = (TP + TN) / (TP + FP + FN + TN)

6. Determine the Misclassification Rate

Also referred to as the error rate, the misclassification rate describes how often the classifier yields the wrong prediction. It’s calculated as the number of incorrect predictions divided by the total number of predictions made by the model, which makes it equal to 1 minus the accuracy:

Error Rate = (FP + FN) / (TP + FP + FN + TN)
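
To make the accuracy and error rate formulas concrete, here is a minimal Python sketch that tallies the four confusion matrix cells and computes both rates. The helper names (`confusion_counts`, `accuracy`, `error_rate`) and the actual-value split are hypothetical. The example reuses the 50-question scenario with 40 predicted “correct” and 10 predicted “incorrect,” but the source does not give the actual outcomes, so those numbers are assumed purely for illustration.

```python
from collections import Counter


def confusion_counts(actual, predicted, positive="correct"):
    """Tally TP, FP, FN, TN for a binary problem from paired labels."""
    counts = Counter()
    for a, p in zip(actual, predicted):
        if p == positive:
            # Predicted positive: true positive if the actual value agrees.
            counts["TP" if a == positive else "FP"] += 1
        else:
            # Predicted negative: false negative if the actual value was positive.
            counts["FN" if a == positive else "TN"] += 1
    return counts["TP"], counts["FP"], counts["FN"], counts["TN"]


def accuracy(tp, fp, fn, tn):
    """Accuracy = (TP + TN) / (TP + FP + FN + TN)."""
    return (tp + tn) / (tp + fp + fn + tn)


def error_rate(tp, fp, fn, tn):
    """Error Rate = (FP + FN) / (TP + FP + FN + TN)."""
    return (fp + fn) / (tp + fp + fn + tn)


# Assumed data: 40 predictions of "correct", 10 of "incorrect".
# The actual labels below are invented for the example.
predicted = ["correct"] * 40 + ["incorrect"] * 10
actual = (
    ["correct"] * 35      # predicted correct, actually correct   -> TP
    + ["incorrect"] * 5   # predicted correct, actually incorrect -> FP
    + ["correct"] * 3     # predicted incorrect, actually correct -> FN
    + ["incorrect"] * 7   # predicted incorrect, actually incorrect -> TN
)

tp, fp, fn, tn = confusion_counts(actual, predicted)
print(f"TP={tp} FP={fp} FN={fn} TN={tn}")
print(f"Accuracy:   {accuracy(tp, fp, fn, tn):.2f}")
print(f"Error rate: {error_rate(tp, fp, fn, tn):.2f}")
```

With these assumed labels, 42 of the 50 predictions are right, so the accuracy is 0.84 and the error rate is 0.16. The two always sum to 1.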
